Why Your Facebook Ads Get Rejected (And How to Fix It Fast)

TL;DR

Most rejections are process failures, not “bad luck.”

Creative, landing page, and account context must align.

A pre-submit validation workflow dramatically reduces rejection loops.

Fast fixes require root-cause classification, not random edits.

Stable ad approval is an operations system, not a one-time tactic.

Quick Definition

A Facebook ad rejection means Meta’s review system found that your ad content, targeting intent, landing page behavior, or account-level risk profile conflicts with current ad policies. The practical objective is to build a repeatable approval system that catches predictable rejection patterns before submission.

Why Rejections Keep Happening to Good Teams

Many teams assume rejections only happen when ads are “aggressive” or clearly non-compliant. In practice, rejection risk often comes from weak operational consistency: one person changes copy, another swaps the landing page, a third duplicates an old campaign with outdated claims.

Counterargument: “Meta review is inconsistent anyway, so process only helps a little.”

Trade-off: yes, review outcomes can vary at the edges. But teams with strong pre-submit controls still see fewer repeat failures and faster recovery when rejections happen.

Edge case: highly regulated industries can still get flagged despite careful wording because surrounding context (audience claims, page framing, implied promises) increases sensitivity.

Concrete scenario: a team writes compliant ad copy but links to a page with stronger, unqualified claims than the ad itself. The ad gets rejected not because the copy is catastrophic, but because ad-message and destination-message do not match.

Common misconception: approval depends only on ad text. In reality, approval evaluates the full experience.

Takeaway: Rejection prevention starts with workflow alignment, not heroic last-minute edits.

Takeaway: “Compliant copy” is insufficient if destination and intent are misaligned.
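The ad-versus-destination mismatch described above can be caught with a simple pre-submit comparison. The sketch below is one possible way to do it, not how Meta's review works: the phrase list `STRONG_CLAIMS` and the function names are hypothetical, and a real check would need a vocabulary tuned to your own vertical.

```python
import re

# Hypothetical phrase list for illustration only; it is not Meta's policy vocabulary.
STRONG_CLAIMS = ("guaranteed", "instant", "cure", "risk-free", "100%")


def find_claims(text: str) -> set[str]:
    """Return the strong-claim phrases that appear in the text as whole words."""
    lowered = text.lower()
    found = set()
    for phrase in STRONG_CLAIMS:
        # Lookarounds stop "cure" from matching inside words like "secure".
        pattern = r"(?<!\w)" + re.escape(phrase) + r"(?!\w)"
        if re.search(pattern, lowered):
            found.add(phrase)
    return found


def landing_drift(ad_copy: str, landing_copy: str) -> set[str]:
    """Return strong claims made on the landing page but absent from the ad."""
    return find_claims(landing_copy) - find_claims(ad_copy)
```

A non-empty result from `landing_drift` means the destination promises more than the ad does, which is the situation in the concrete scenario above. An empty result only means none of the listed phrases drifted; it does not mean the pair is compliant.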

The CLEAR Framework for Approval Stability

Category 1: Campaign Workflow Tools

Draft -> Review -> Scheduled enforcement

Approval status visibility

Version control across ad variants

Category 2: Policy QA Tools

Claim-risk checklist integration

Landing-page consistency checks

Rejection reason logging quality

Category 3: Performance + Compliance Ops

Incident-to-fix traceability

Team handoff clarity

Repeat-failure detection support

Counterargument: “Manual review docs are enough.”

Trade-off: manual docs can work for low volume; at scale they fail under launch pressure.

Edge case: solo operators can stay lightweight if they maintain strict naming, review cadence, and rejection logs.

Concrete scenario: a team tags each campaign with rejection reason categories. After one quarter, they identify recurring landing mismatch as the top failure and redesign the workflow.
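The tagging scenario above comes down to counting rejection categories over a period. Here is a minimal sketch, assuming each rejection is logged as a small record with a `category` field. The field names and the sample entries are made up for illustration; the category labels follow the ones used in the recovery protocol later in this article.

```python
from collections import Counter

# Made-up sample data; in practice this would come from your own rejection log.
rejection_log = [
    {"campaign": "spring-sale-a", "category": "landing"},
    {"campaign": "spring-sale-b", "category": "claim"},
    {"campaign": "webinar-1", "category": "landing"},
    {"campaign": "webinar-2", "category": "creative"},
    {"campaign": "retarget-1", "category": "landing"},
]


def top_failures(log, n=3):
    """Return the n most frequent rejection categories with their counts."""
    counts = Counter(entry["category"] for entry in log)
    return counts.most_common(n)


print(top_failures(rejection_log, n=1))  # [('landing', 3)]
```

Even a spreadsheet can produce this tally. The point is that the category field has to be filled in consistently at the time of each rejection, or the count is not trustworthy.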

Common misconception: tools replace policy understanding. Tools enforce discipline; judgment remains human.

Takeaway: Choose tools that preserve compliance memory across team changes.

Takeaway: Visibility beats guesswork during rejection recovery.

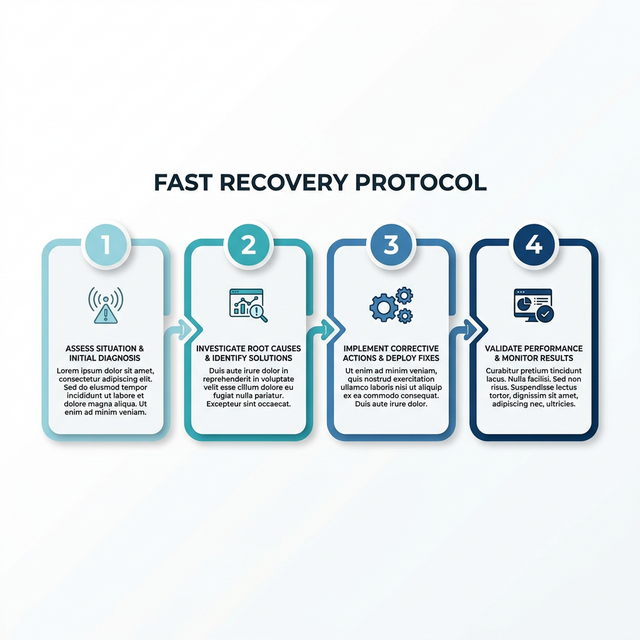

Fast Recovery Protocol (When an Ad Is Rejected)

The six-step Fast Recovery Protocol is designed to restore ad delivery rapidly without triggering further account restrictions.

Step 1: Freeze Random Edits

Do not change five variables at once.

Step 2: Classify Rejection Type

Map to one primary category: claim, audience framing, creative, landing, or account pattern.

Step 3: Align Ad + Landing

Check wording symmetry and implied promises.

Step 4: Replace High-Risk Language

Use precise, non-absolute wording.

Step 5: Submit Controlled Variant

Change minimal variables to isolate what resolved the issue.

Step 6: Log Decision

Document cause, fix, and safe future template.
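The steps above can be partly enforced in code. The sketch below covers three of them under stated assumptions: classification against a fixed category set, a check that a resubmitted variant changes only a few fields, and a log record for the decision. The field names, the `max_changes` default of 2, and the class name are all hypothetical choices, not part of any Meta tooling.

```python
from dataclasses import dataclass

# Categories taken from the classification step; the identifiers are this sketch's own.
CATEGORIES = {"claim", "audience_framing", "creative", "landing", "account_pattern"}


def changed_fields(original: dict, variant: dict) -> list[str]:
    """List the fields whose values differ between the rejected ad and the variant."""
    keys = sorted(set(original) | set(variant))
    return [key for key in keys if original.get(key) != variant.get(key)]


def is_controlled(original: dict, variant: dict, max_changes: int = 2) -> bool:
    """True when the variant changes at least one field but no more than max_changes."""
    return 0 < len(changed_fields(original, variant)) <= max_changes


@dataclass(frozen=True)
class RejectionLogEntry:
    campaign: str
    category: str  # exactly one primary category
    cause: str
    fix: str

    def __post_init__(self):
        # Reject free-text categories so later tallies stay comparable.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")
```

For example, comparing a rejected ad with a variant that differs only in `headline` yields `["headline"]` from `changed_fields`, so `is_controlled` returns `True`. A variant that rewrites headline, body, and image at once would fail the check with the default limit.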

Counterargument: “In a launch crunch, we should just rewrite everything and resubmit.”

Trade-off: full rewrites may pass faster occasionally, but they reduce learning quality and repeatability.

Edge case: if rejection reason is unclear after one controlled revision, escalate to broader rewrite with explicit policy guardrails.

Concrete scenario: a team changes only the headline and aligns the page intro with it. Approval succeeds. They now know that mismatch, not the offer, caused the failure.

Common misconception: more edits means better chance of approval.

Takeaway: Controlled iteration resolves issues faster than panic rewriting.

Takeaway: Every rejection should produce a reusable process improvement.

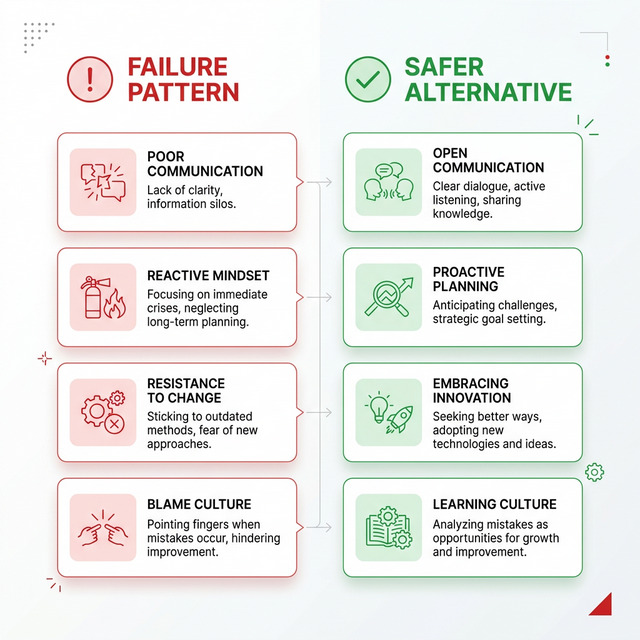

Common Failure Patterns (and Safer Alternatives)

The patterns below pair high-risk failure modes in ad copy, visuals, and process with safer, compliant alternatives.

Failure Pattern 1: Promise-first copy

Risk: implies guaranteed transformation.

Safer alternative: evidence-aware, bounded outcomes.

Failure Pattern 2: Emotional pressure framing

Risk: perceived manipulative intent.

Safer alternative: neutral benefit framing.

Failure Pattern 3: Landing drift

Risk: policy mismatch between ad and destination.

Safer alternative: mirrored message architecture.

Failure Pattern 4: No ownership map

Risk: compliance tasks fall through handoffs.

Safer alternative: single approval owner per campaign.

Failure Pattern 5: Rejection memory loss

Risk: same mistakes repeated across quarters.

Safer alternative: rejection library + safe template system.
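For the promise-first pattern in particular, a small wording scan can flag absolute language before a human reviews the copy. The following is a rough sketch: the `SAFER_WORDING` mapping and its suggestions are assumptions made up for illustration, not policy text, and a suggestion is only a prompt for a rewrite, never an automatic replacement.

```python
import re

# Illustrative mapping only; both the phrases and the suggestions are assumptions.
SAFER_WORDING = {
    "guaranteed": "designed to help",
    "always": "often",
    "never": "rarely",
    "instantly": "quickly",
}


def flag_absolutes(copy: str) -> list[tuple[str, str]]:
    """Return (absolute phrase, suggested bounded wording) pairs found in the copy."""
    found = []
    for phrase, suggestion in SAFER_WORDING.items():
        # Whole-word, case-insensitive match.
        if re.search(rf"\b{re.escape(phrase)}\b", copy, flags=re.IGNORECASE):
            found.append((phrase, suggestion))
    return found
```

Running it on "Guaranteed results, instantly!" flags `guaranteed` and `instantly`; copy such as "Build a steadier routine." returns an empty list. An empty list does not prove the copy is safe, since implied promises and emotional pressure framing are not visible to a word list.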

Counterargument: “Strict templates reduce creativity.”

Trade-off: rigid templates can constrain novelty, but they reduce avoidable policy risk. Best approach: controlled creative freedom within policy-safe scaffolds.

Edge case: high-concept creative campaigns may need broader experimentation—but should still pass through compliance framing rules.

Concrete scenario: a team keeps creative variety but enforces policy-safe CTA patterns and landing consistency checks. Performance remains strong while rejection rates drop.

Common misconception: compliance means bland advertising.

Takeaway: Compliance boundaries can improve clarity, not kill creative quality.

Takeaway: Predictable systems free time for better creative testing.

Governance Model for Teams

Minimum structure for stable approval outcomes:

campaign owner

compliance reviewer

landing page owner

escalation path for ambiguous cases

weekly rejection review
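The role list above can be turned into a lightweight submit gate that follows the Draft -> Review -> Scheduled flow mentioned earlier. This is a sketch under assumptions: the campaign is a plain dictionary, and the keys `owners`, `stage`, and `reviewer_approved` are hypothetical names chosen for the example.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    REVIEW = "review"
    SCHEDULED = "scheduled"


# Roles from the governance list; the identifiers are this sketch's own.
REQUIRED_ROLES = ("campaign_owner", "compliance_reviewer", "landing_page_owner")


def missing_roles(campaign: dict) -> list[str]:
    """Return the required roles that have no named person assigned."""
    owners = campaign.get("owners", {})
    return [role for role in REQUIRED_ROLES if not owners.get(role)]


def advance(campaign: dict) -> Stage:
    """Return the next stage, or raise ValueError if the gate is not satisfied."""
    stage = campaign["stage"]
    if stage is Stage.DRAFT:
        gaps = missing_roles(campaign)
        if gaps:
            raise ValueError(f"unassigned roles: {', '.join(gaps)}")
        return Stage.REVIEW
    if stage is Stage.REVIEW:
        if not campaign.get("reviewer_approved"):
            raise ValueError("compliance reviewer has not approved")
        return Stage.SCHEDULED
    raise ValueError("campaign is already scheduled")
```

A solo operator can use the same gate by putting their own name in all three roles. The value is in being forced to switch into reviewer mode before the stage can move.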

Counterargument: “Small teams cannot afford this structure.”

Trade-off: small teams cannot afford repeated rejection chaos either. Roles can be lightweight but still explicit.

Edge case: solo operators can run role-switching by sequence (creator mode, reviewer mode, submit mode).

Concrete scenario: assigning one “final reviewer” before submit cuts accidental policy drift from rapid edits.

Common misconception: governance is overhead. In ad operations, governance is quality control.

Takeaway: Clear ownership reduces policy drift under pressure.

Takeaway: Weekly review turns failures into compounding advantages.

Free Tools (Quick Links)

Facebook Ad Rejection Checker — Quick pre-submit scan to catch common wording and structure risks.

Ad-Landing Consistency Checker — Compare ad promise vs landing message for mismatch risk.

Policy-Safe Headline Generator — Generate alternative headlines with safer claim framing.

Campaign Approval Checklist — Standardized Draft -> Review -> Scheduled gate for teams.

FAQ

Why do Facebook ads get rejected even when copy looks fine?

Because review evaluates message context, targeting implications, creative cues, and destination consistency—not just isolated text.

Should we duplicate an approved ad and assume safety?

No. Variant context can change risk profile, especially when destination or framing changes.

What is the fastest way to fix a rejected ad?

Classify root cause first, then make controlled edits tied to that category.

Is rejection prevention mostly a legal task?

No. It is primarily a workflow and quality-control task with policy awareness.

Can small teams reduce rejections without heavy tools?

Yes—by enforcing simple checklists, explicit ownership, and rejection logs.

How often should teams review rejection patterns?

Weekly for active teams; less frequent schedules increase repeat-error risk.

Conclusion

Facebook ad rejection is not just a policy issue—it is an operations quality signal. Teams that align claims, landing context, and approval governance can reduce rejection loops and recover faster when flags occur.

The objective is not “never get rejected.” The objective is to build a system where rejections are rare, diagnosable, and quickly fixable.

Key Takeaways

Approval depends on the full ad experience, not ad text alone.

The CLEAR framework improves pre-submit stability.

Root-cause classification prevents random fix cycles.

Governance and logs turn failures into repeatable improvements.

Compliance discipline supports velocity and performance.
