TL;DR

Strong moderation is a trust system, not a reply-count game.

You rarely need to “win” arguments; you need to protect public trust.

The right response sequence can de-escalate hostility and increase credibility.

Teams fail mostly from routing chaos, inconsistent tone, and weak escalation logic.

Community quality compounds when governance, training, and review loops are explicit.

Quick Definition

Community management is the operating discipline that converts public interactions, including friction, into brand trust through consistent response logic, clear boundaries, and measurable quality standards. “Turning trolls into loyal fans” is less about persuading every hostile commenter and more about demonstrating fairness, competence, and emotional control in public.

Why This Matters More Than Most Teams Think

In social channels, your reply is not only for the person attacking you. It is for the silent majority reading without commenting. That audience decides whether your brand feels trustworthy based on how you handle pressure.

Counterargument: “Trolls are not convertible, so engagement with them is wasted effort.”

Trade-off: some bad-faith actors are indeed non-convertible. But public response quality still matters because bystanders evaluate your standards through those moments. Even when the troll does not change, audience trust can.

Edge case: in harassment-heavy threads, over-engagement can amplify harm. In those situations, rapid boundary-setting and controlled disengagement can be better than prolonged debate.

Concrete scenario: a brand receives an aggressive complaint with partial truth. Instead of defensive language, the team acknowledges the valid part, clarifies the missing context, and offers a next step. The original commenter stays difficult, but the thread is perceived as fair and professional by everyone else.

Common misconception: moderation success means no negative comments. In reality, success means consistent, credible handling of inevitable friction.

Takeaway: Public comment handling is reputation infrastructure.

Takeaway: Audience trust grows from visible consistency under pressure.

The CALM-TRIAGE Framework

The CALM-TRIAGE framework breaks community moderation into repeatable steps, from classifying intent to governance logging, turning reactive responses into a scalable system.

Use CALM-TRIAGE to reduce emotional reactivity and improve outcomes:

C — Classify intent

Is the comment constructive criticism, frustrated confusion, performative provocation, coordinated trolling, or policy-violating abuse?

A — Acknowledge valid signal

Recognize any legitimate concern briefly and specifically.

L — Limit boundary violations

Set clear boundaries for abusive language, misinformation repetition, or harassment.

M — Move to resolution path

Offer a concrete next step: correction, support route, or close-with-boundary.

TRIAGE layer (operational)

T: thread risk level (low/medium/high)

R: required responder role

I: intervention type (public clarify / DM / remove / escalate)

A: accountability owner

G: governance note in incident log

E: evaluation in weekly review
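The TRIAGE layer above can be captured as one record per thread. The following is a minimal sketch, not a prescribed implementation: the role names in `RESPONDER_BY_RISK`, the `open_triage` helper, and the field names are all hypothetical and should be replaced with your team's own roles and tooling.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Intervention(Enum):
    PUBLIC_CLARIFY = "public clarify"
    DM = "DM"
    REMOVE = "remove"
    ESCALATE = "escalate"


# Hypothetical role names (assumption); substitute your team's actual roles.
RESPONDER_BY_RISK = {
    Risk.LOW: "community_responder",
    Risk.MEDIUM: "community_lead",
    Risk.HIGH: "senior_reviewer",
}


@dataclass
class TriageRecord:
    thread_id: str
    risk: Risk                   # T: thread risk level
    responder_role: str          # R: required responder role
    intervention: Intervention   # I: intervention type
    owner: str                   # A: accountability owner
    governance_note: str = ""    # G: note for the incident log
    reviewed: bool = False       # E: set to True after the weekly review


def open_triage(thread_id: str, risk: Risk, intervention: Intervention,
                owner: str, note: str = "") -> TriageRecord:
    """Create a record whose responder role is derived from the risk level."""
    return TriageRecord(
        thread_id=thread_id,
        risk=risk,
        responder_role=RESPONDER_BY_RISK[risk],
        intervention=intervention,
        owner=owner,
        governance_note=note,
    )


record = open_triage("thread-123", Risk.HIGH, Intervention.ESCALATE,
                     owner="sam", note="repeat refund claim")
print(record.responder_role)
```

Deriving the responder role from the risk level keeps routing explicit: nobody has to decide under pressure who should answer a high-risk thread.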

Counterargument: “This is too heavy for social speed.”

Trade-off: yes, structure adds discipline overhead. But without it, teams waste far more time in repeat conflict and inconsistent decisions.

Edge case: small teams can run a lighter version with two risk levels and one escalation owner, as long as routing remains explicit.

Concrete scenario: a team tags incoming threads by risk before responding. High-risk threads route to a senior reviewer; low-risk threads follow standard templates. Response quality improves while stress drops.

Common misconception: frameworks make communication robotic. Good frameworks free people from panic decisions.

Takeaway: CALM-TRIAGE turns moderation into a repeatable capability.

Takeaway: Fast moderation is structured moderation, not improvised moderation.

What Actually Creates Hostility Loops

Most “troll spirals” are not caused by one comment. They are caused by system weaknesses:

Inconsistent voice across responders

No boundary language standard

Unclear ownership during escalation

Public replies disconnected from support resolution

No post-incident learning loop

Counterargument: “Some communities are just toxic; process won’t help.”

Trade-off: baseline toxicity varies by niche, yes. But process quality still influences escalation probability, perceived fairness, and bystander trust.

Edge case: politically charged or identity-heavy topics may require stricter moderation thresholds and quicker escalation to policy enforcement.

Concrete scenario: the same issue appears in three threads. One responder apologizes, another argues, and a third deletes comments. The inconsistency fuels accusations. After the team introduces response standards, recurrence of the issue stabilizes.

Common misconception: toxicity is mostly external. Often it is amplified by internal inconsistency.

Takeaway: Troll loops are usually process loops.

Takeaway: Consistency is a stronger trust lever than rhetorical cleverness.

Response Design: What to Say, When, and Why

Step 1: Mirror concern, not aggression

Use concise acknowledgment: “You’re right to flag this part.”

Step 2: Clarify scope

State what is true, what is incomplete, and what action is possible.

Step 3: Set boundary if needed

“Happy to continue if we keep it respectful and specific.”

Step 4: Offer a next step

Give route, owner, and expected response window.
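The four steps above can be sketched as a small template helper. This is an illustrative sketch only: `build_reply` is a hypothetical function, and the scope and next-step wording in the example are invented placeholders rather than recommended copy.

```python
from typing import Optional


def build_reply(acknowledgment: str, scope: str, next_step: str,
                boundary: Optional[str] = None) -> str:
    """Assemble a reply in the four-step order; the boundary line is optional."""
    parts = [acknowledgment, scope]
    if boundary:
        # Step 3 is included only when the thread needs it.
        parts.append(boundary)
    parts.append(next_step)
    return " ".join(part.strip() for part in parts)


reply = build_reply(
    acknowledgment="You're right to flag this part.",
    # Placeholder scope and next-step text (assumptions for illustration).
    scope="The delay affected orders placed last week; newer orders ship normally.",
    boundary="Happy to continue if we keep it respectful and specific.",
    next_step="Our support team will follow up by DM within one business day.",
)
print(reply)
```

Keeping the boundary optional mirrors Step 3: most replies do not need it, and adding it by default can make a calm thread feel policed.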

Counterargument: “Firm boundaries make brands look cold.”

Trade-off: overly harsh boundaries can feel defensive, but no boundaries invite thread collapse. Calm precision usually balances empathy and control.

Edge case: repeated personal attacks should move quickly from warning to enforcement to protect community safety.

Concrete scenario: a user posts repeated insults after two clarifications. The team gives one final boundary message, then applies policy. Bystanders read the sequence as fair, not arbitrary.

Common misconception: longer replies are always better. Quality and direction matter more than length.

Takeaway: Effective moderation language is specific, bounded, and directional.

Takeaway: A clear “what happens next” line reduces conflict repetition.

Tool Evaluation Rule (3 Categories × 3 Criteria)

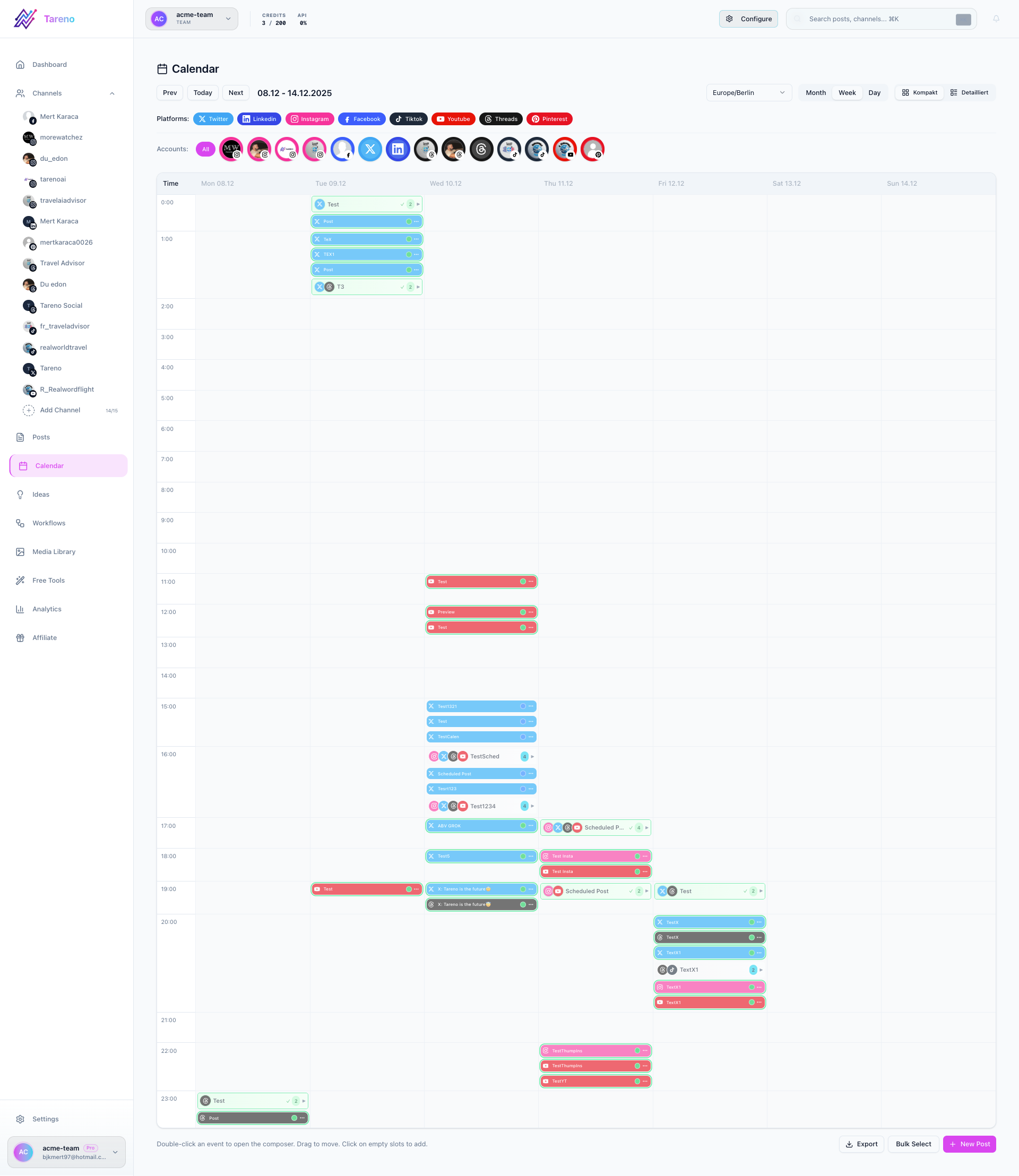

Category 1: Comment Operations

inbox triage clarity

thread ownership assignment

context continuity across shifts

Category 2: Governance Workflow

enforced Draft -> Review -> Scheduled workflow for sensitive replies

approval status visibility

escalation routing reliability

Category 3: Quality & Risk Tracking

incident log traceability

repeat-pattern detection

weekly review readiness
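One way to apply the 3 × 3 rule is to rate each criterion and average per category. The sketch below assumes a 1 to 5 rating scale and uses hypothetical identifier names for the nine criteria; neither the scale nor equal weighting comes from the rule itself.

```python
from typing import Dict, List

# Hypothetical identifiers for the nine criteria listed above.
CRITERIA: Dict[str, List[str]] = {
    "comment_operations": [
        "inbox_triage_clarity",
        "thread_ownership_assignment",
        "context_continuity",
    ],
    "governance_workflow": [
        "workflow_enforcement",
        "approval_status_visibility",
        "escalation_routing_reliability",
    ],
    "quality_risk_tracking": [
        "incident_log_traceability",
        "repeat_pattern_detection",
        "weekly_review_readiness",
    ],
}


def score_tool(ratings: Dict[str, int]) -> Dict[str, float]:
    """Average the ratings per category; raise if any criterion is unrated."""
    result = {}
    for category, criteria in CRITERIA.items():
        missing = [c for c in criteria if c not in ratings]
        if missing:
            raise ValueError(f"missing ratings: {missing}")
        result[category] = sum(ratings[c] for c in criteria) / len(criteria)
    return result


# Example ratings on an assumed 1-5 scale.
example_ratings = {
    "inbox_triage_clarity": 4,
    "thread_ownership_assignment": 3,
    "context_continuity": 5,
    "workflow_enforcement": 2,
    "approval_status_visibility": 3,
    "escalation_routing_reliability": 4,
    "incident_log_traceability": 5,
    "repeat_pattern_detection": 5,
    "weekly_review_readiness": 2,
}
scores = score_tool(example_ratings)
print(scores)
```

Reporting one score per category, rather than a single total, makes it harder for a strong inbox to hide a weak governance workflow.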

Counterargument: “A shared inbox and good instincts are enough.”

Trade-off: at low volume, maybe. At scale, instinct-only moderation produces inconsistency and legal/reputation exposure.

Edge case: solo creators can stay lightweight if they still keep a simple risk rubric and review cadence.

Concrete scenario: a team adds approval states for high-risk replies. Escalation quality improves because senior review happens before public posting, not after the damage is done.

Common misconception: tools solve moderation quality. Tools only enforce discipline you already designed.

Takeaway: Choose tools that protect continuity and accountability.

Takeaway: Moderation memory is a strategic asset.

Escalation Matrix (When to Go Public, Private, or Remove)

Keep public when:

clarification benefits broader audience

issue can be solved without personal data

tone is tense but still manageable

Move private when:

account-specific details are needed

legal/payment identity issues appear

trust recovery requires longer context

Remove/limit when:

harassment, hate, threats, or policy-violating abuse appears

repeated disinformation is intentionally amplified
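The matrix above can be read as a small decision function. This is a sketch under stated assumptions: the `ThreadSignals` fields and `choose_path` are hypothetical names, and checking removal conditions first, then private, then public, is an assumed priority order that the matrix does not spell out.

```python
from dataclasses import dataclass


@dataclass
class ThreadSignals:
    policy_violation: bool = False           # harassment, hate, threats, abuse
    amplified_disinformation: bool = False   # repeated and intentional
    needs_account_details: bool = False
    legal_or_payment_identity: bool = False
    needs_longer_context: bool = False       # trust recovery needs more room


def choose_path(s: ThreadSignals) -> str:
    """Return 'remove', 'private', or 'public'.

    Removal conditions are checked first (assumed priority), then the
    conditions for moving private; everything else stays public.
    """
    if s.policy_violation or s.amplified_disinformation:
        return "remove"
    if (s.needs_account_details or s.legal_or_payment_identity
            or s.needs_longer_context):
        return "private"
    return "public"


print(choose_path(ThreadSignals(needs_account_details=True)))
```

The function returns a single path, so cases that call for a public reply plus a private follow-up would need an extra return value or a list of actions.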

Counterargument: “Public correction always demonstrates transparency.”

Trade-off: transparency matters, but some issues become performative and unresolvable in public. Private resolution can protect both speed and dignity.

Edge case: high-visibility false claims may require both a brief public correction and a private follow-up.

Concrete scenario: a brand posts a concise public correction and moves account-specific details to DM. Thread heat decreases while clarity remains visible.

Common misconception: deleting toxic comments always looks censorious. Consistent policy application usually reads as responsible moderation.

Takeaway: Escalation should be documented, not improvised.

Takeaway: Good moderation balances transparency with harm reduction.

Metrics That Actually Matter

Stop measuring only reply volume. Track:

Resolution quality rate (threads closed with clear next step)

Trust recovery signal (users returning with neutral/positive tone)

Boundary compliance rate (fewer repeat violations per incident)

Responder consistency score (tone/routing alignment across team)

Escalation precision (right issues escalated at right time)
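Two of these metrics reduce to simple ratios once threads are scored in the weekly review. The sketch below is one possible operationalization, not a standard definition: `ThreadOutcome` and its fields are hypothetical, and escalation precision here only counts whether an escalation was judged warranted, so it ignores timing and missed escalations.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ThreadOutcome:
    closed: bool
    closed_with_next_step: bool
    escalated: bool
    escalation_was_warranted: bool  # judged by a reviewer in the weekly review


def resolution_quality_rate(threads: List[ThreadOutcome]) -> float:
    """Share of closed threads that ended with a clear next step."""
    closed = [t for t in threads if t.closed]
    if not closed:
        return 0.0
    return sum(t.closed_with_next_step for t in closed) / len(closed)


def escalation_precision(threads: List[ThreadOutcome]) -> float:
    """Share of escalated threads where escalation was judged warranted."""
    escalated = [t for t in threads if t.escalated]
    if not escalated:
        return 0.0
    return sum(t.escalation_was_warranted for t in escalated) / len(escalated)


# Invented sample data for illustration only.
week = [
    ThreadOutcome(True, True, False, False),
    ThreadOutcome(True, False, True, True),
    ThreadOutcome(True, True, True, False),
    ThreadOutcome(False, False, True, True),
]
print(round(resolution_quality_rate(week), 2), round(escalation_precision(week), 2))
```

Both functions return 0.0 when there is nothing to score, which keeps a quiet week from raising a division error in the review sheet.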

Counterargument: “These metrics are subjective.”

Trade-off: some qualitative interpretation is unavoidable, but structured scoring still outperforms vanity counts.

Edge case: small teams can start with two metrics (resolution quality + consistency) before expanding.

Concrete scenario: after introducing weekly thread scoring, a team sees that reply speed is high but closure quality is low. They adjust templates and improve outcomes.

Common misconception: speed is the top KPI. In conflict threads, clarity and closure quality drive trust.

Takeaway: Quality metrics prevent “busy moderation” with poor outcomes.

Takeaway: What gets measured becomes repeatable.

14-Day Community Stabilization Plan

Days 1–3: System setup

define categories

define boundaries

assign owners

Days 4–7: Live deployment

run CALM-TRIAGE on all incoming threads

document high-risk cases

Days 8–10: Pattern review

identify repeat triggers

tighten templates and escalation points

Days 11–14: Calibration sprint

coach responders on weak patterns

update decision matrix

lock v1 playbook

Counterargument: “Two weeks is too short.”

Trade-off: full maturity takes longer, but two weeks is enough to remove obvious chaos and establish control.

Edge case: high-volume channels may need parallel moderation support during rollout.

Concrete scenario: a team running this plan reduces duplicate escalations and improves thread closure consistency within one cycle.

Common misconception: playbooks should be finalized before deployment. Operational playbooks improve fastest through measured iteration.

Takeaway: Start with workable standards, then refine with evidence.

Takeaway: Stability comes from cadence, not one-time documents.

Free Tools (Quick Links)

Comment Risk Classifier — Tag comments by risk level before replying.

Escalation Matrix Builder — Define when to reply publicly, move to DM, or enforce policy.

Response Template Studio — Build calm, bounded, and directional response starters.

Weekly Moderation Review Sheet — Score closure quality and consistency across responders.

FAQ

Can trolls really become loyal fans?

Sometimes. But the larger win is trust growth among silent observers.

Should every negative comment receive a long response?

No. Use proportional responses with clear direction and closure.

When should we move to DM?

When account-specific details, legal context, or repetitive hostility make public resolution less useful.

Should brands ever delete comments?

Yes, when comments violate policy boundaries. Apply the policy consistently and be transparent about it.

What is the first metric to track?

Start with resolution quality rate and responder consistency.

How often should teams calibrate moderation standards?

Weekly for active channels; less frequent review increases drift risk.

Conclusion

Great community management is not about appearing perfect. It is about being reliably fair, clear, and controlled when pressure rises. Brands that operationalize moderation through classification, boundaries, and review loops build trust that compounds across campaigns.

Key Takeaways

Community trust is built in conflict moments, not only in positive comments.

CALM-TRIAGE improves response quality and team consistency.

Escalation rules should be explicit and documented.

Quality metrics beat vanity moderation counts.

Structured moderation protects both brand trust and team wellbeing.
