TL;DR

Leaked TikTok documents are useful as directional evidence, not as a permanent ranking formula.

Predictable growth comes from a repeatable test loop: hypothesis, controlled variant, measurement window, decision.

The strongest practical signal bundle is retention quality + value depth (saves, shares, meaningful comments).

Teams should evaluate videos in batches, not one-offs, to avoid emotional overreaction.

A stable workflow beats trend-chasing: clear promise, sharp opening, structured payoff, weekly diagnostics.

Quick Definition

The TikTok algorithm is a recommendation system that predicts which videos a specific viewer is likely to watch and act on. “Leaked docs” are internal-style materials that offer operational hints about how that system behaves. They can improve your testing strategy, but they cannot replace current account-level data.

Why the “Leaked Docs” Conversation Matters

Most teams sit in one of two unhealthy extremes. One group treats leaks as conspiracy content and ignores them. The other treats leaks as an exact engineering blueprint and overfits to old assumptions. Both positions create poor decisions.

The useful position is operational: treat leaked material as a hypothesis source. If a leak suggests a behavior pattern (for example: retention drops after weak openings), turn that into a controlled test and measure with your own audience.

Mini-example:

If a leaked source implies early hold matters disproportionately, publish a 4-video batch with identical topic and format, but different opening structures. Compare first-3-second hold and 25% watch-through before making a workflow decision.
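The comparison in this mini-example can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the variant names, the view counts, and the idea that you can export "views held past 3 seconds" and "views reaching 25%" as raw counts are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    name: str            # hypothetical label for the opening structure
    views: int           # total views inside the measurement window
    held_3s: int         # views that lasted at least 3 seconds
    reached_25pct: int   # views that reached 25% of the video

    @property
    def hold_rate(self) -> float:
        # Share of views that survived the first 3 seconds.
        return self.held_3s / self.views if self.views else 0.0

    @property
    def watch_through_25(self) -> float:
        # Share of views that reached the 25% mark.
        return self.reached_25pct / self.views if self.views else 0.0


def rank_variants(batch):
    """Sort variants by 3s hold, breaking ties with 25% watch-through."""
    return sorted(
        batch,
        key=lambda v: (v.hold_rate, v.watch_through_25),
        reverse=True,
    )


# Made-up numbers for a 4-video batch with identical topic and format.
batch = [
    VariantStats("question_open", views=1000, held_3s=620, reached_25pct=410),
    VariantStats("audience_label_open", views=1200, held_3s=840, reached_25pct=540),
    VariantStats("stat_open", views=900, held_3s=540, reached_25pct=378),
    VariantStats("generic_open", views=1100, held_3s=550, reached_25pct=330),
]

for v in rank_variants(batch):
    print(f"{v.name}: 3s hold {v.hold_rate:.0%}, "
          f"25% watch-through {v.watch_through_25:.0%}")
```

Rates are used instead of raw counts so that variants with different view totals stay comparable. A single batch like this only produces a ranking; it is not yet a workflow decision.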

What Is Likely True vs What Is Usually Misread

Likely true

TikTok optimizes for viewer satisfaction signals, not creator entitlement.

Early relevance affects whether a clip expands into broader distribution waves.

Topic clarity improves audience matching and downstream engagement quality.

Commonly misread

Exact signal weights (nobody outside the platform can rely on fixed weights).

Viral myths such as “one posting time hack changes everything.”

Isolated metric worship (for example, maximizing completion while saves and shares collapse).

Decision rule: if a tactic improves your own outcomes across multiple batches, keep it. If it works once and then disappears, treat it as noise.

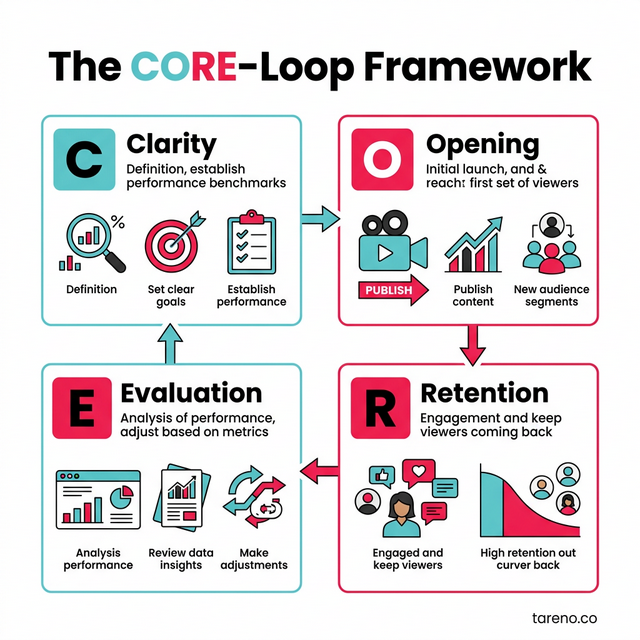

CORE-Loop: A Practical Framework for Non-Random TikTok Growth

The CORE-Loop Framework for TikTok

Use one framework end-to-end so the team does not improvise each post from scratch.

C — Clarity

Define one audience, one pain point, and one promised outcome per video.

Mini-example:

Weak: “How to grow on social media.”

Strong: “How B2B founders can write a 9-second TikTok hook that avoids generic intros.”

O — Opening Architecture

The first seconds should establish relevance instantly: audience cue + problem cue + promised payoff.

Mini-example:

“Your TikTok retention dies before second four because your first line is broad. Here is the exact fix.”

R — Retention Design

Retention is engineered through pacing and payoff sequencing, not luck.

Mini-example:

Insert a concrete proof moment by second 7 (before/after script line, metric screenshot, or visual contrast) instead of delaying value.

E — Evaluation Loop

Review in fixed cycles (weekly), not post-by-post panic.

Mini-example:

Track per format: 3s hold, 25% watch-through, completion tendency, saves, shares, comment quality, profile visits.
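A weekly per-format review could be sketched like this. The format names, field names, and numbers are hypothetical, and the sketch covers only the numeric signals; comment quality needs a human read and is left out on purpose.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical weekly export: one dict per published video.
posts = [
    {"format": "talking_head", "hold_3s": 0.62, "watch_25": 0.41, "saves": 14, "shares": 6},
    {"format": "talking_head", "hold_3s": 0.58, "watch_25": 0.39, "saves": 10, "shares": 4},
    {"format": "screen_demo", "hold_3s": 0.70, "watch_25": 0.50, "saves": 30, "shares": 12},
    {"format": "screen_demo", "hold_3s": 0.66, "watch_25": 0.46, "saves": 26, "shares": 8},
]

METRICS = ("hold_3s", "watch_25", "saves", "shares")


def summarize_by_format(rows):
    """Average each metric per format and record how many posts fed the average."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["format"]].append(row)

    summary = {}
    for fmt, items in grouped.items():
        stats = {m: round(mean(r[m] for r in items), 3) for m in METRICS}
        stats["n"] = len(items)  # sample size, so thin averages are visible
        summary[fmt] = stats
    return summary


for fmt, stats in summarize_by_format(posts).items():
    print(fmt, stats)
```

Keeping the post count `n` next to each average makes it harder to over-read a format that has only one or two videos behind it.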

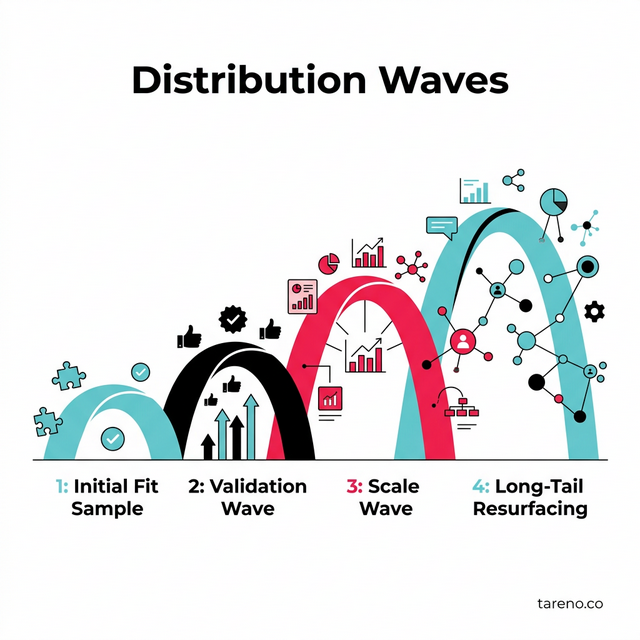

How Distribution Usually Expands in Waves

TikTok Distribution Waves Model

TikTok generally expands distribution in stages. TikTok's internal labels may differ from the ones used here, but the pattern is consistent enough for practical planning.

Initial fit sample

A smaller audience sample tests immediate relevance and watch behavior.

Validation wave

If retention and interaction quality hold, the video reaches broader but still adjacent segments.

Scale wave

Strong clips can expand into larger pools where shares and saves often determine durability.

Long-tail resurfacing

Topic-aligned clips can re-enter distribution later if user behavior remains positive.

Trade-off:

Over-optimizing for broad reach can weaken niche trust. For B2B or expert accounts, depth quality may outperform pure top-of-funnel reach.

Signal Stack: What to Prioritize Without Pretending You Know Exact Weights

You do not need secret ranking math to improve outcomes. You need a practical priority stack.

Tier 1: Retention quality

early hold

mid-video stability

completion tendency for the given length band

Tier 2: Value depth

saves (future intent)

shares (social utility)

meaningful comments (interpretation, questions, application)

Tier 3: Context fit

topic consistency across recent posts

on-screen text alignment with spoken message

caption language matching search and viewer intent

Edge case:

Some entertaining clips produce good completion but low saves and low profile actions. That can look successful while contributing little to business objectives.
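One way to catch this edge case is to flag clips whose completion looks healthy while their depth signals stay thin. The sketch below is an assumption-heavy illustration: the two floor values are placeholders rather than known TikTok thresholds, and "depth rate" (saves plus shares per view) is a made-up proxy for Tier 2.

```python
def depth_rate(clip):
    """Saves plus shares per view; a rough, hypothetical proxy for value depth."""
    if not clip["views"]:
        return 0.0
    return (clip["saves"] + clip["shares"]) / clip["views"]


def flag_shallow_wins(clips, completion_floor=0.40, depth_floor=0.01):
    """Return ids of clips with good completion but weak saves and shares.

    Both floors are placeholders; calibrate them against your own account history.
    """
    return [
        c["id"]
        for c in clips
        if c["completion"] >= completion_floor and depth_rate(c) < depth_floor
    ]


# Made-up example data.
clips = [
    {"id": "clip_a", "views": 10000, "completion": 0.55, "saves": 20, "shares": 15},
    {"id": "clip_b", "views": 8000, "completion": 0.48, "saves": 160, "shares": 80},
    {"id": "clip_c", "views": 5000, "completion": 0.22, "saves": 10, "shares": 5},
]

print(flag_shallow_wins(clips))  # ['clip_a']
```

Here `clip_a` is flagged because it completes well but earns only 0.35% depth, `clip_b` passes on both tiers, and `clip_c` is not flagged because its completion is already low enough to show up as a retention problem.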

A Reliable Test Method for Leak-Driven Hypotheses

Step 1 — Write one clear hypothesis

Example: “Specific audience-labeled openings improve early hold for workflow content.”

Step 2 — Freeze non-test variables

Keep topic, length bracket, posting rhythm, and CTA class stable.

Step 3 — Produce controlled variants

Run 3–5 variants with one deliberate difference (opening framing, visual pace, proof timing).

Step 4 — Measure in fixed windows

Evaluate at 48 hours and 7 days to avoid premature conclusions.

Step 5 — Decide with thresholds

Set keep/stop thresholds before the batch runs. Keep only patterns that repeat across batches; archive failures with notes.

Mini-example:

If “audience-labeled opening” beats generic opening across three batches on 3s hold and 25% watch-through, codify it into the script template.
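The decision rule in this mini-example can be written down so it is applied the same way every time. The following is a minimal sketch under stated assumptions: each batch is reduced to one control reading and one variant reading taken at the same measurement window, the metric names are hypothetical, and "beats" means strictly higher on both metrics with no significance testing.

```python
METRICS = ("hold_3s", "watch_25")


def variant_wins(batch):
    """True if the test variant beats the control on every tracked metric."""
    return all(batch["variant"][m] > batch["control"][m] for m in METRICS)


def decide(batches, min_batches=3):
    """Apply the repeat-across-batches rule.

    - fewer than min_batches measured: keep testing
    - variant wins in every batch: codify into the script template
    - otherwise: archive the hypothesis with notes
    """
    if len(batches) < min_batches:
        return "keep testing"
    if all(variant_wins(b) for b in batches):
        return "codify"
    return "archive with notes"


# Made-up 7-day readings for three batches (generic opening vs audience-labeled opening).
batches = [
    {"control": {"hold_3s": 0.52, "watch_25": 0.33}, "variant": {"hold_3s": 0.61, "watch_25": 0.40}},
    {"control": {"hold_3s": 0.49, "watch_25": 0.31}, "variant": {"hold_3s": 0.57, "watch_25": 0.36}},
    {"control": {"hold_3s": 0.55, "watch_25": 0.35}, "variant": {"hold_3s": 0.60, "watch_25": 0.41}},
]

print(decide(batches))      # codify
print(decide(batches[:2]))  # keep testing
```

Writing the rule as a function forces the threshold (`min_batches`) to be chosen before results come in, which is the point of Step 5. A stricter version could also require a minimum margin or minimum view count per batch.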

When Leaks Help vs When They Hurt

Leaks help when

your team lacks structure and needs testable hypotheses

you are building a repeatable format system

you document outcomes and update templates

Leaks hurt when

you copy tactics without audience fit

you avoid checking your own diagnostics

you use leak talk to justify low-quality fundamentals

Misconception to remove:

“Algorithm understanding is enough.”

It is not. Packaging quality and topical utility still decide whether viewers stay and act.

Common Failure Patterns in TikTok Teams

1) Hook says one thing, body delivers another

Fix by matching the first promise with a concrete payoff in the first third.

2) One video tries to solve three problems

Fix by reducing each clip to one clear decision outcome.

3) Visual pace does not match information density

Fix by reducing filler transitions and adding proof moments sooner.

4) No format memory

Fix by maintaining a format library with observed outcomes and constraints.

5) Emotional analytics

Fix by adopting batch-level decisions and predefined stop/keep thresholds.

Tool Stack That Supports the Workflow

TikTok Creative Center [SOURCE: https://ads.tiktok.com/business/creativecenter]

Use for topic/creative benchmarking and pattern discovery.

CapCut [SOURCE: https://www.capcut.com]

Use for fast variation tests in openings, pacing, and proof placement.

Notion [SOURCE: https://www.notion.so]

Use as a controlled test log (hypothesis, variant, window, decision).

Google Sheets [SOURCE: https://workspace.google.com/products/sheets/]

Use for signal tracking and trend comparison across format batches.
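If the test log lives in a spreadsheet, its four fields (hypothesis, variant, window, decision) map directly onto CSV columns. The sketch below uses only the Python standard library; the `LogEntry` class and the sample rows are hypothetical, and the resulting text is meant to be saved as a `.csv` file and imported manually rather than pushed through any particular tool's API.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class LogEntry:
    hypothesis: str  # the single claim being tested
    variant: str     # which controlled variant this row describes
    window: str      # measurement window, e.g. "48h" or "7d"
    decision: str    # outcome recorded after the window closes


def to_csv(entries):
    """Serialize log entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(LogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Made-up example rows.
log = [
    LogEntry("Audience-labeled openings improve early hold", "audience_label_open", "7d", "codify"),
    LogEntry("Audience-labeled openings improve early hold", "generic_open", "7d", "archive with notes"),
]

print(to_csv(log))
```

Keeping one row per variant and window makes it easy to filter the sheet by hypothesis later and see whether a pattern repeated across batches.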

FAQ

Do leaked TikTok docs still matter in 2026?

Yes, as directional evidence. They are useful to generate better hypotheses, but your current audience response is the real decision source.

Is completion rate more important than comments?

Neither should be isolated. Completion indicates flow quality; comments indicate interpretation depth. The healthiest clips often combine both with saves or shares.

Can small accounts still get strong reach?

Yes. TikTok still evaluates clip-level quality. Smaller accounts win when topic positioning is specific, openings are immediate, and format quality is consistent.

How many posts per week are realistic for lean teams?

A sustainable baseline is usually three to five high-quality posts weekly, then scale only after format reliability improves.

Should we copy viral formats exactly?

Copying structure can help; copying identity usually fails. Keep the mechanism, adapt the language and examples to your audience context.

What is the best diagnostic cadence?

Use a weekly deep review with a short mid-week pulse check. That rhythm balances speed with enough data stability for reliable decisions.

Key Takeaways

Treat leaks as hypothesis input, not as fixed truth.

Build each video around one precise promise and one clear payoff.

Optimize signal bundles, not isolated vanity metrics.

Evaluate in batches with fixed windows and predefined thresholds.

Keep only repeatable patterns and archive what fails.

Final Implementation Checklist

Define one audience problem and one promised result per clip.

Design opening architecture for immediate relevance.

Place at least one proof moment before mid-video drop-off.

Track retention + depth signals together.

Run controlled variant tests and decide by repeated outcomes.

Update templates weekly so quality compounds over time.