TL;DR

No, you usually do not need to label every piece of content written with ChatGPT.

Disclosure becomes more important when omission could mislead people, when endorsement rules apply, or when a platform requires labels for realistic AI-generated media.

The biggest operational mistake is treating AI-assisted text and realistic synthetic media as if they were the same category.

A risk-based publishing policy works better than a blanket AI disclaimer on every post.

Human review, factual verification, and platform checks matter more than a generic “written by AI” badge.

AI disclaimer decision checklist

If you are deciding whether to label content written by ChatGPT, separate ordinary AI-assisted text from content that could mislead the audience. A blanket disclaimer on every caption is often less useful than a risk-based publishing rule.

Low-risk AI-assisted text: drafts, captions, outlines, email copy, or edits that a human reviews and owns.

Higher-risk synthetic media: realistic images, voice, video, or impersonation-like content that could make people believe something happened.

Disclosure-sensitive cases: endorsements, regulated claims, political content, health or financial advice, testimonials, and platform-specific AI labels.

Workflow rule: document who checked facts, policy requirements, and platform labels before publishing.

Quick Definition

AI content labeling means telling people that artificial intelligence helped create, alter, or generate content. But that phrase covers very different situations: a marketer tightening a caption with ChatGPT, a team publishing a fully AI-drafted article, or a creator uploading a realistic AI-generated video. Those cases are not governed by the same rules.

Do you need to label ChatGPT-written content?

Usually, no. There is no universal rule that every ChatGPT-written blog post, caption, or newsletter must carry a public label just because AI helped draft it.

The better question has three parts. Would the audience be misled without disclosure? Does the platform require a label for this format? Does the content present synthetic realism, endorsement, or personal authenticity in a way that changes how people judge it? If the answer to any of them is yes, disclosure moves from optional to wise, and sometimes from wise to required.

A simple example shows the difference. If your team uses ChatGPT to tighten a LinkedIn post and a human editor verifies the claims, that usually does not trigger a special label by default. But if you publish an AI-generated customer review that looks like a real person’s lived experience, the problem is no longer efficiency. The problem is deception.

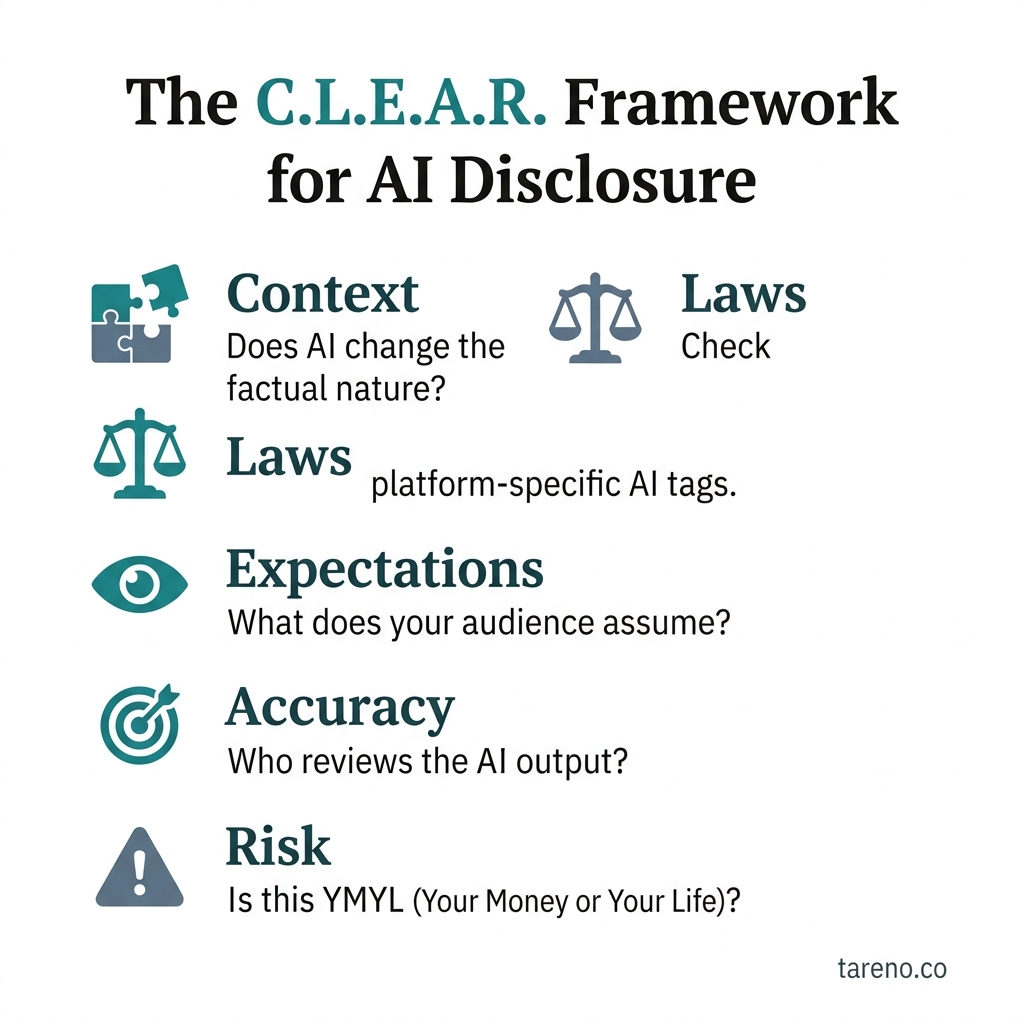

The C.L.E.A.R. Framework for AI Disclosure

The C.L.E.A.R. framework for AI disclosure

To avoid both panic and complacency, use the C.L.E.A.R. framework:

C — Consumer expectation: Would a reasonable person care that AI created or materially altered this?

L — Legal or compliance trigger: Is there a disclosure rule tied to endorsements, regulated claims, or deceptive presentation?

E — Editing vs. generation: Was AI only assisting with drafting, or did it create the core expression or realistic media?

A — Authenticity claim: Does the content imply first-hand experience, human identity, or documentary reality?

R — Rule of the platform: Does the channel require a disclosure tool or label for this format?

This framework is practical because it matches how real content teams work. Instead of arguing about AI in the abstract, you evaluate the actual asset in front of you.
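As a minimal sketch, the five C.L.E.A.R. questions can be recorded as one yes/no answer per asset. The class and field names below are hypothetical and chosen only for illustration. The code does not decide anything on its own: it just reports which questions a reviewer answered "yes".

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ClearAnswers:
    """One yes/no answer per C.L.E.A.R. question for a single asset (hypothetical names)."""
    consumer_would_care: bool       # C: a reasonable person would care that AI made or altered it
    legal_trigger: bool             # L: endorsement, regulated claim, or deceptive presentation
    ai_generated_core: bool         # E: AI created the core expression or realistic media
    authenticity_claim: bool        # A: implies first-hand experience, identity, or reality
    platform_requires_label: bool   # R: the channel requires a label for this format


def triggered_questions(answers: ClearAnswers) -> list[str]:
    """Return the names of the questions answered 'yes', in C-L-E-A-R order."""
    return [f.name for f in fields(answers) if getattr(answers, f.name)]


# A human-edited caption: no question fires.
edited_caption = ClearAnswers(False, False, False, False, False)
# A synthetic founder voice on a platform that labels realistic AI audio.
founder_voice = ClearAnswers(True, False, True, True, True)

print(triggered_questions(edited_caption))  # []
print(triggered_questions(founder_voice))
```

An empty list suggests ordinary AI-assisted work. Any non-empty list is a prompt for a human to look closer, not an automatic verdict.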

When you usually do NOT need a label

Most teams use AI somewhere in the workflow: outline generation, headline variants, grammar cleanup, summary rewrites, or caption alternatives. In those ordinary cases, a public label is usually unnecessary when all of the following are true:

a human editor reviews the final copy,

factual claims are checked,

the piece is not pretending to be a personal testimony,

the platform does not require a disclosure for that format.

For example, imagine a social team asks ChatGPT to turn product notes into three caption options for a launch. A marketer picks one, edits it, corrects a feature detail, and publishes it. That is an AI-assisted editorial workflow, not a synthetic-media transparency issue. The caption still has to do its normal job, including Instagram SEO signals, but an edited caption does not need a public AI badge just because AI helped draft it.

The same logic applies to many routine blog workflows. If AI helps restructure a draft, improve readability, or summarize research, the more important question is whether the final piece is accurate and responsibly edited. Tools do not remove editorial accountability.

When you probably SHOULD label or disclose

Disclosure matters much more when AI changes how the audience interprets authorship, reality, or trust.

High-risk cases include:

testimonials and reviews that appear to come from real customers,

influencer or sponsored content where disclosure rules already apply,

realistic AI-generated audio, image, or video that a platform expects to be labeled,

first-person or expert claims that imply lived experience or real identity.

Take a simple scenario. A brand publishes a founder video with an AI-generated voice that sounds like the founder, or a fake “customer reaction” clip built from synthetic faces. In that case, the audience is evaluating not just the words but the authenticity of the person behind them. That is where disclosure expectations rise fast.

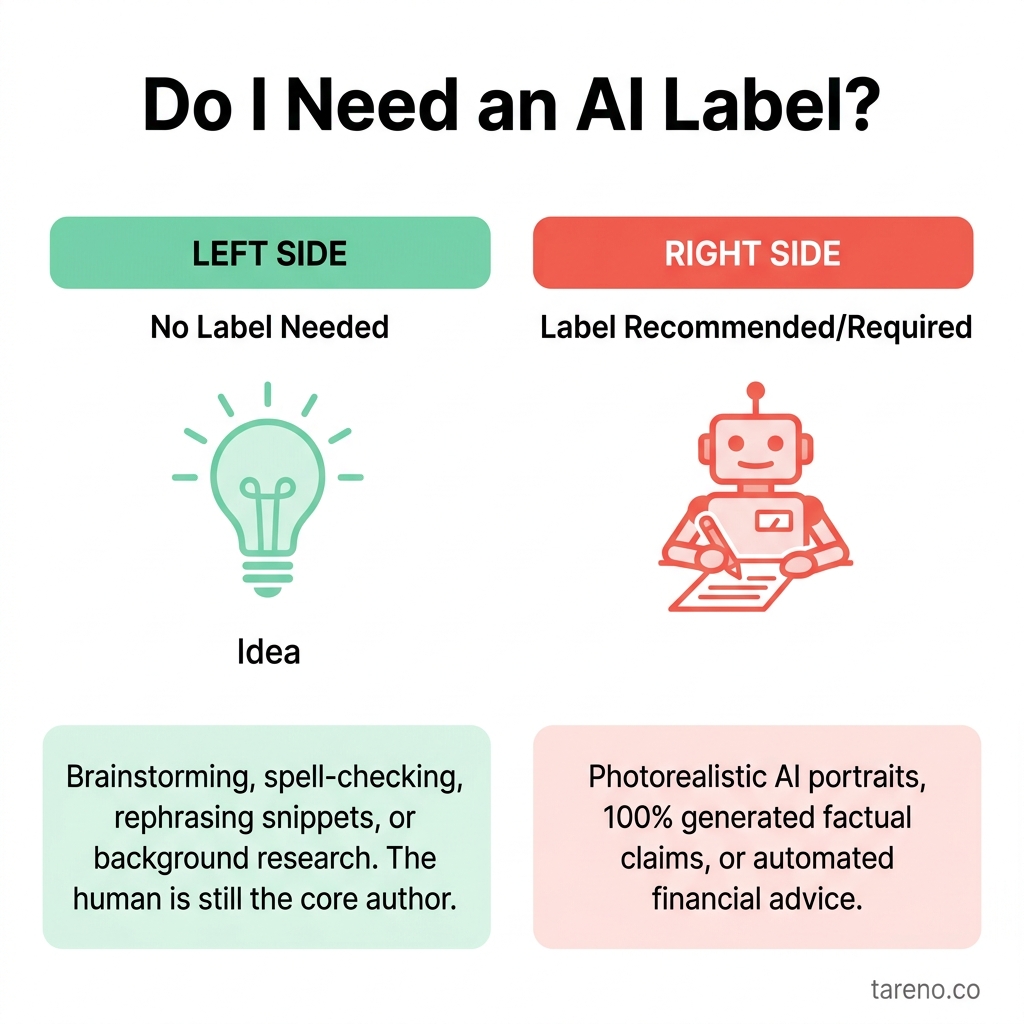

Do I Need an AI Label?

Comparison: not every AI use carries the same disclosure burden

| Content type | Public label usually needed? | Main risk | Extra review needed? |
| --- | --- | --- | --- |
| AI-assisted text drafting, then human edited | Usually no | Factual errors, weak oversight | Yes, editorial review |
| Fully AI-written article published as brand content | Sometimes | Trust, authorship expectations | Yes, policy decision |
| AI-generated testimonial or personal story | Usually yes / often avoid entirely | Deception | Yes, legal + editorial review |
| Realistic AI-generated or AI-altered image/audio/video | Often yes, depending on platform | Synthetic realism, manipulation | Yes, platform + policy review |
| Sponsored content using AI plus a material connection | Sponsorship disclosure already required; AI may add transparency value | Misleading endorsement | Yes, compliance review |

This comparison matters because many teams overreact to the wrong risk. AI-assisted text is usually a workflow issue. Realistic synthetic media is usually a transparency issue. Treating them as identical leads to sloppy policy.

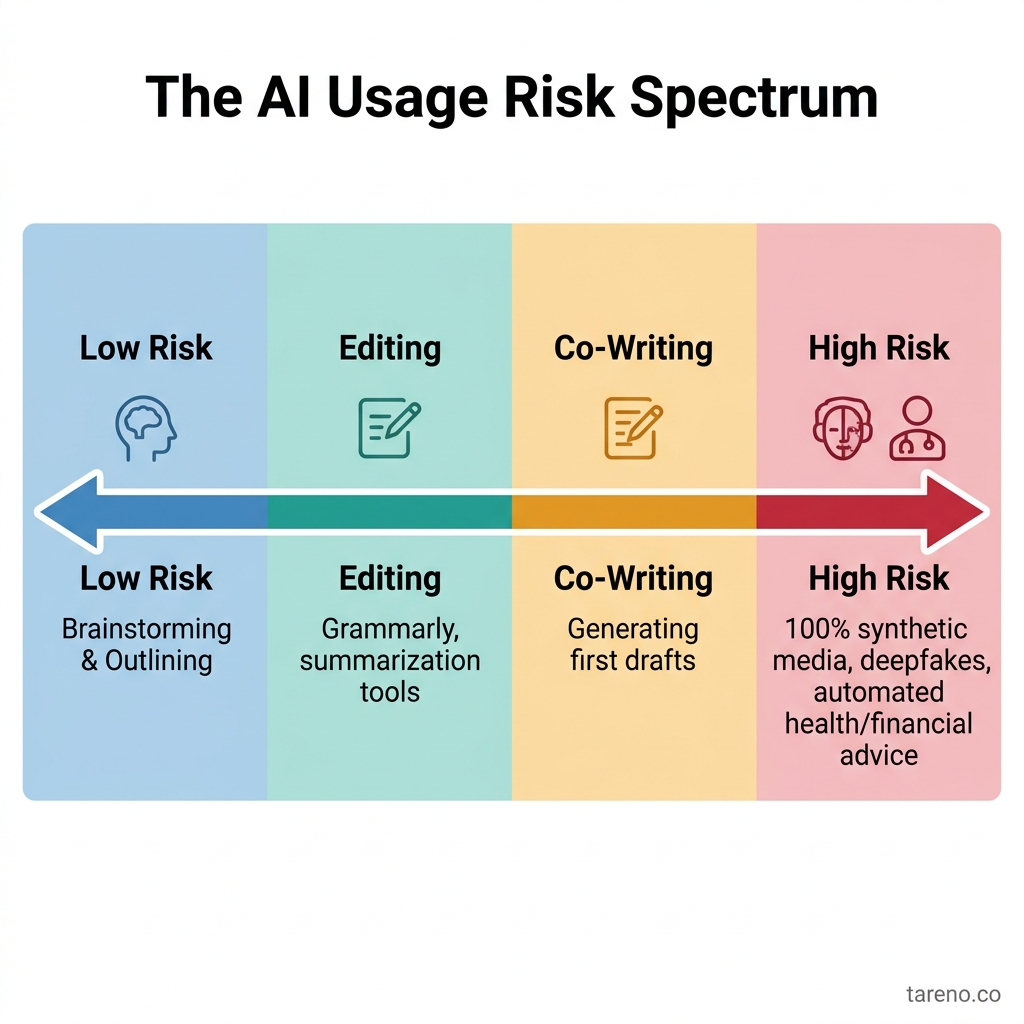

The AI Usage Risk Spectrum

What the major rule sources actually suggest

FTC: disclosure is about misleading omission, not the mere use of a tool

The FTC’s endorsement guidance focuses on material connections and on whether people would evaluate content differently if they knew about something important that was left out. That matters most in testimonials, endorsements, and sponsored situations. Using ChatGPT to draft standard marketing copy does not automatically create a stand-alone FTC label requirement.

EU AI Act: transparency is more specific than “label every AI sentence”

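The transparency obligations in Article 50 are aimed at specific AI contexts and outputs, especially where people need to know they are interacting with AI or seeing synthetic or deepfake-like content. Article 50 does include a narrower provision for AI-generated text published to inform the public on matters of public interest, with an exception where the text has undergone human review and someone holds editorial responsibility for it. It should not be read as a blanket rule for every AI-assisted paragraph in a newsletter.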

YouTube, Meta, and TikTok: realistic AI media is where labels become more concrete

Public platform guidance from YouTube, Meta, and TikTok is much more explicit about realistic altered or synthetic media than about ordinary AI-assisted text. If you create a realistic AI-generated clip, image, or voice, disclosure expectations rise. If you use ChatGPT to polish a caption, the issue is usually quality control, not a platform label. Platform rules also differ from one another, so teams should check each destination rather than publish on a one-size-fits-all assumption.

A practical workflow for teams

A durable policy does not begin with “Did we use AI at all?” It begins with “What exactly are we publishing, and what would omission hide?”

Use this workflow:

Classify the asset. Is it text, image, audio, video, or mixed media?

Assess realism. Does it depict a real person, real event, or first-hand experience?

Check the claim type. Is it editorial copy, an endorsement, a testimonial, or regulated advice?

Check the platform rule. Does the destination channel require a built-in AI or altered-content disclosure?

Decide the disclosure level. None, contextual note, or platform-native label plus explicit text.

Record the decision internally. Keep a lightweight log so the team stays consistent.

Publish with review. Make sure a human approves factual claims and the disclosure choice.
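The steps above can be sketched as a small function plus a log record. This is an illustration under stated assumptions, not a compliance tool: the article names the three disclosure levels but does not say exactly which answers map to which level, so the mapping in `decide_disclosure` is an assumption, as are all class, field, and function names. The platform rule (step 4) is an input that a person looks up, not something the code infers.

```python
from dataclasses import dataclass, asdict
from enum import Enum
import json


class Disclosure(str, Enum):
    NONE = "none"
    CONTEXTUAL_NOTE = "contextual note"
    PLATFORM_LABEL_PLUS_TEXT = "platform-native label plus explicit text"


@dataclass
class Asset:
    media_type: str           # step 1: "text", "image", "audio", "video", or "mixed"
    depicts_real: bool        # step 2: real person, real event, or first-hand experience
    claim_type: str           # step 3: "editorial", "endorsement", "testimonial", "regulated"
    platform_requires: bool   # step 4: checked by a human against the destination's rules


def decide_disclosure(asset: Asset) -> Disclosure:
    """Step 5. The thresholds here are an assumed mapping, not the article's."""
    realistic_media = asset.media_type != "text" and asset.depicts_real
    if asset.platform_requires or realistic_media:
        return Disclosure.PLATFORM_LABEL_PLUS_TEXT
    if asset.depicts_real or asset.claim_type != "editorial":
        return Disclosure.CONTEXTUAL_NOTE
    return Disclosure.NONE


def log_entry(asset: Asset, reviewer: str) -> str:
    """Steps 6 and 7: record the decision and the human who approved it."""
    record = {**asdict(asset),
              "disclosure": decide_disclosure(asset).value,
              "reviewer": reviewer}
    return json.dumps(record)


caption = Asset("text", False, "editorial", False)
print(decide_disclosure(caption).value)   # none
print(log_entry(caption, reviewer="editor-a"))
```

Keeping the log as one JSON line per asset is only one option. The point is that the decision and the approving reviewer are written down somewhere the team can check later.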

If your team publishes across multiple platforms, it helps to make the disclosure decision part of the publishing workflow instead of a last-minute memory test. A tool like Tareno’s Post Composer (AI Tag) can serve as an internal checkpoint: editors can flag posts that involve AI-sensitive formats, confirm whether a platform-native label is needed, and keep that decision consistent across channels. In practice, operational governance matters more than tool hype.

When disclosure helps even if it is not legally required

Sometimes disclosure is strategically smart even when it is not clearly required.

That is especially true when:

the piece is framed as a personal reflection,

the brand sells trust, expertise, or ethics,

the audience is likely to care about process transparency,

the sector is sensitive, such as finance, healthcare, or public affairs.

For instance, if a named executive publishes a thought-leadership essay that was heavily drafted by AI, a short editorial note may strengthen trust rather than weaken it. The key is proportion. A small, honest note can clarify process without turning the article into a legal memo.

When to use disclosure — and when not to

A simple way to apply the framework is to sort posts into three lanes.

Lane 1: No public label needed

Use this lane for ordinary AI-assisted work where a human still owns the output.

headline brainstorming

caption rewrites

grammar cleanup

outline generation

article summarization followed by fact-checking

Mini-example: a content manager uses ChatGPT to produce five hooks for a webinar recap, edits the final version, checks the date and offer details, and publishes. The meaningful signal to the audience is the value of the post, not the drafting tool.

Lane 2: Optional but wise disclosure

Use this lane when trust context is unusually high even if regulation is less explicit.

personal essays under an executive byline

educational content in sensitive industries

opinion pieces where process transparency supports credibility

customer-facing explainers that describe how advice was prepared

Mini-example: a financial newsletter uses AI to draft a market summary, but an analyst materially revises and approves it. A short editorial note may reassure readers without implying the analysis was machine-led.

Lane 3: Review before publish, and likely disclose

Use this lane when authenticity or reality is part of the claim.

customer reviews or testimonial-style posts

AI avatars representing real employees

synthetic voiceovers that sound like identifiable people

altered clips of real events

sponsored content that could confuse viewers about what is real, paid, or personally experienced

Mini-example: a creator posts an AI-generated “customer reaction” Reel that looks like user-generated content. Even if the product benefits are real, the presentation changes how the audience interprets the endorsement.

A lightweight team policy you can actually enforce

Many teams fail because their policy is either too vague (“be transparent”) or too absolute (“label everything”). A better policy is short enough that editors can remember it.

A workable internal rule might look like this:

No label for routine AI-assisted drafting after human review.

Escalate for review if the post contains first-person claims, testimonials, regulated advice, or synthetic likeness.

Use platform-native labels for realistic AI-generated media when the platform expects them.

Add a contextual note when the brand decides that process transparency adds trust.

Do not publish AI-generated testimonials, invented customer stories, or fake documentary-style proof.

This kind of rule is easier to execute because it maps to publishing reality. Editors do not have to debate philosophy on every asset. They only need to classify the content correctly and follow the lane.
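One way to make the five rules checkable is to tag each post and run the tags through the rules in a fixed order, with the "do not publish" rule first. The tag strings and the function name are hypothetical. The "hold for human review" action is an assumption drawn from the first rule, which only waives the label after human review.

```python
# Hypothetical tag vocabulary; a real team would define its own.
BLOCKED = {"ai_generated_testimonial", "invented_customer_story", "fake_documentary_proof"}
ESCALATE = {"first_person_claim", "testimonial", "regulated_advice", "synthetic_likeness"}


def apply_policy(tags: set[str], human_reviewed: bool) -> list[str]:
    """Return the actions the five-rule policy implies for one post."""
    # Rule 5 is checked first: fabricated proof is never published.
    if tags & BLOCKED:
        return ["do not publish"]

    actions = []
    # Rule 1 only waives the label after human review (assumed: otherwise hold).
    if not human_reviewed:
        actions.append("hold for human review")
    # Rule 2: sensitive claim types go to a reviewer.
    if tags & ESCALATE:
        actions.append("escalate for review")
    # Rule 3: realistic AI media on a platform that expects a label.
    if {"realistic_ai_media", "platform_expects_label"} <= tags:
        actions.append("use platform-native label")
    # Rule 4: the brand has decided a note adds trust.
    if "transparency_adds_trust" in tags:
        actions.append("add contextual note")

    return actions or ["no label"]


print(apply_policy(set(), human_reviewed=True))                          # ['no label']
print(apply_policy({"ai_generated_testimonial"}, human_reviewed=True))   # ['do not publish']
```

Because the rules return a list of actions rather than a single answer, a post can be both escalated and labeled, which matches how the rules read when more than one applies.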

Common mistakes teams make

Mistake 1: treating "AI helped" as the whole policy

A tool-centered policy sounds simple, but it fails in practice. It does not distinguish between a harmless grammar pass and a synthetic founder voice. The publish decision should follow audience impact, not only production method.

Mistake 2: assuming a site-wide disclaimer solves everything

A blanket statement such as “some content may be created with AI” can help with general transparency, but it does not replace format-specific decisions. If a realistic synthetic video needs a platform label, a footer note elsewhere is not enough.

Mistake 3: confusing authorship with truthfulness

Some teams worry too much about whether AI touched the draft and too little about whether the final claim is accurate. Readers are usually harmed more by false specifics, invented testimonials, or misleading framing than by invisible drafting assistance.

Mistake 4: hiding behind the platform tool

Built-in disclosure tools are useful, but they are not magic. If the overall post still gives the wrong impression, teams may need clearer context in the post itself.

What a good final review looks like

Before publishing, ask a reviewer to confirm four things:

Accuracy: Are facts, names, dates, and product claims checked?

Authenticity: Does the piece imply human experience or documentary reality?

Disclosure fit: Does this asset need no label, a contextual note, or a platform-native label?

Audience impact: Would the average reader feel misled after learning how the content was made?

This review is especially useful in cross-channel workflows, where the same core asset might be harmless as a blog summary but disclosure-sensitive as a short-form synthetic video.
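The four checks can also be captured as a small pre-publish gate that lists what is still unresolved. This is a sketch with hypothetical names. Treating "implied human experience with no disclosure" as a blocking issue is an assumption about how a team might combine the authenticity and disclosure-fit checks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FinalReview:
    facts_checked: bool                # Accuracy
    implies_human_experience: bool     # Authenticity
    disclosure_choice: Optional[str]   # Disclosure fit: "none", "contextual note", "platform label"
    reader_would_feel_misled: bool     # Audience impact


def blocking_issues(review: FinalReview) -> list[str]:
    """Return the unresolved checks; an empty list means the reviewer can approve."""
    issues = []
    if not review.facts_checked:
        issues.append("accuracy: facts, names, dates, or product claims unchecked")
    if review.disclosure_choice is None:
        issues.append("disclosure fit: no decision recorded")
    # Assumed rule: an implied human experience needs some form of disclosure.
    if review.implies_human_experience and review.disclosure_choice == "none":
        issues.append("authenticity: implied human experience with no disclosure")
    if review.reader_would_feel_misled:
        issues.append("audience impact: reader would likely feel misled")
    return issues


blog_summary = FinalReview(True, False, "none", False)
print(blocking_issues(blog_summary))  # []
```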

When NOT to over-disclose

Over-disclosure creates its own problem: label fatigue. If every routine caption carries a dramatic AI warning, the audience stops learning anything useful from the label. Worse, teams start treating all AI involvement as equally important, which hides the cases where transparency truly matters.

A better standard is proportionality. Use stronger disclosure where AI changes authenticity, realism, or audience interpretation. Use lighter governance where AI simply helps a human editor work faster.

FAQ

Do I legally have to label every ChatGPT blog post?

Usually no. A universal “label every AI-written post” rule is too broad. The need for disclosure depends on deception risk, platform policy, and context.

Does the EU AI Act require labels on all AI text?

No. The Act's transparency obligations are more specific than that and are closely tied to certain AI systems and synthetic or deepfake-like outputs.

Do platform AI labels cover written copy too?

Usually not in the same way. Public guidance is much more focused on realistic altered or synthetic media than on ordinary AI-assisted captions or article drafts.

Should I disclose AI use in sponsored content?

You already need clear sponsorship disclosure where endorsement rules apply. If AI also changes the authenticity of the content, add that to your review.

What about AI-edited transcripts or repurposed content?

Repurposing a transcript into a blog post usually does not need an AI label by default. What matters is whether the result is accurate, reviewed, and not misleading about who said what, so repurposed transcripts still need editorial review before they are published.

Can I use a generic site-wide AI disclaimer?

You can, but it is often too blunt to be useful. A targeted policy tied to content type and platform is usually clearer.

Key Takeaways

There is no universal rule requiring a label on every piece of ChatGPT-written text.

Disclosure becomes more important when content affects authenticity, realism, endorsements, or audience trust.

Platform rules are most concrete around realistic AI-generated or AI-altered media, not ordinary AI-assisted copy.

The safest approach is a risk-based workflow with human review, not a blanket yes-or-no rule.

Good governance beats both panic labeling and total opacity.

Quotable Passage

You do not need to confess every use of ChatGPT; you need to disclose the moments when AI changes what the audience thinks is real, personal, or independently trustworthy.

Sources

FTC Endorsement Guides FAQ: https://www.ftc.gov/business-guidance/resources/ftcs-endorsement-guides-what-people-are-asking

EU AI Act Article 50 hub: https://artificialintelligenceact.eu/article/50/

YouTube Help on altered or synthetic content: https://support.google.com/youtube/answer/14328491?hl=en

Meta approach to labeling AI-generated content and manipulated media: https://about.fb.com/news/2024/04/metas-approach-to-labeling-ai-generated-content-and-manipulated-media/

TikTok support on AI-generated content: https://support.tiktok.com/en/using-tiktok/creating-videos/ai-generated-content